Managed Kubernetes Services Compared: GKE vs. EKS vs. AKS

Comparing the three most popular managed Kubernetes platforms in features and overall experience

Bharat Arimilli · Sep 14, 2020 · 8 min read

Photo by Ian Battaglia on Unsplash

As Kubernetes becomes the de facto solution for container orchestration, managed Kubernetes services have popped up everywhere, with cloud providers investing significant effort into their offerings. However, choosing a service often means considering a multitude of factors that are hard to compare without extensive research.

Let’s look at Amazon Elastic Kubernetes Service (EKS), Google Kubernetes Engine (GKE), and Azure Kubernetes Service (AKS) and compare their features, as well as their overall experience.

Note: These services tend to evolve very quickly, so some of these details may be outdated by the time you read them.

Introduction

While most managed Kubernetes services have been around for fewer than three years, one offering was well ahead of the curve. Given that Kubernetes was originally developed at Google, it’s no surprise that Google Kubernetes Engine, released in 2015, predates its competitors by three years. Its largest competitors, AKS and EKS, both launched in 2018, giving GKE a massive head start that is still noticeable in the platform’s maturity and feature support.

Azure Kubernetes Service and Amazon Elastic Kubernetes Service arrived at around the same time as most other cloud providers’ offerings, so they have had a similar amount of time to mature and gain features. Despite their relative youth, these platforms have evolved at a rapid pace since their launch.

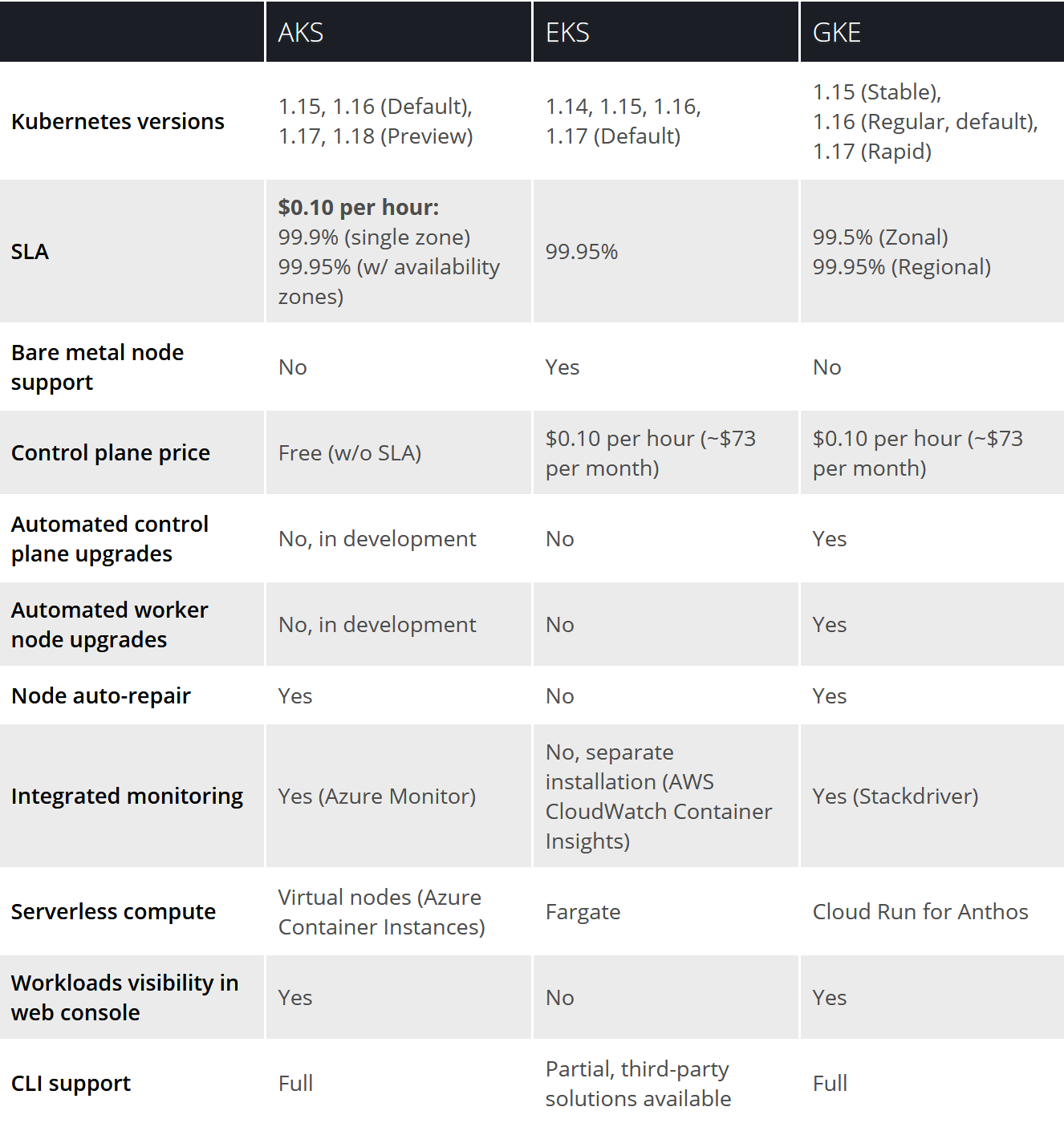

Feature Comparison

Management burden

As seen in the feature table above, GKE leads the way in how much it takes care of for you. Automated control plane and worker node upgrades, as well as node auto-repair, keep your cluster healthy without manual input. AKS supports node auto-repair but does not support automated upgrades (this is in development, according to Microsoft). EKS does not support any automated upgrades or node repair.

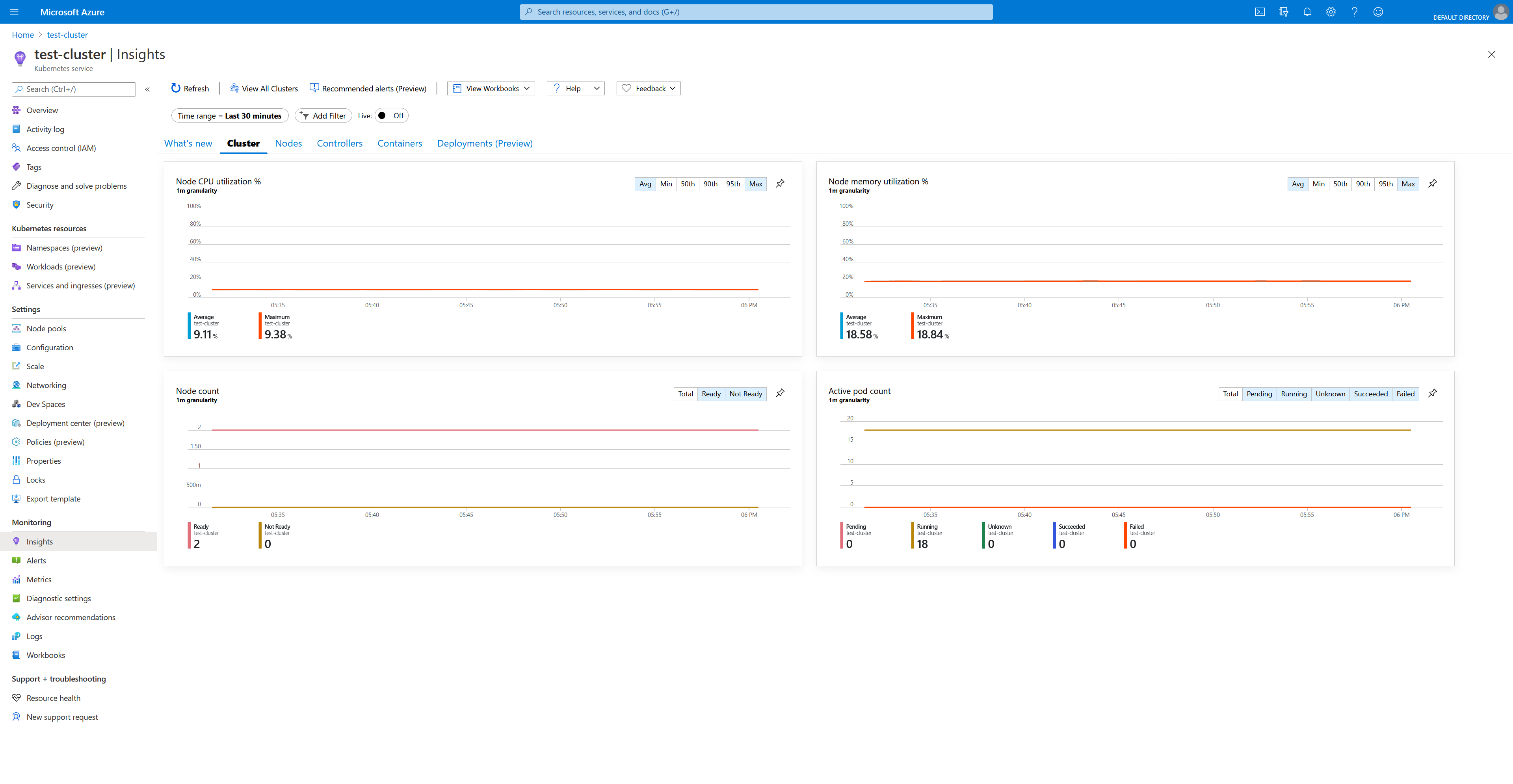

Monitoring

GKE and AKS both have direct integration with their platform’s respective monitoring tools. Both also have modern and well-designed interfaces that make looking through logs, seeing resource usage, and setting alerts very simple. GCP edges out Azure with the best-designed web interface overall, but Azure’s interface also works well enough.

EKS supports logging and monitoring as a separately installed feature known as CloudWatch Container Insights. Although the integration works well and offers comprehensive metrics, AWS’s CloudWatch console is often frustrating to use, with a dated design and a confusing layout. You might prefer setting up a third-party monitoring and logging solution instead.

Serverless compute

GKE offers a serverless compute feature known as Cloud Run for Anthos. This gives you all the usual benefits of the managed Cloud Run serverless container platform, including deploying highly scalable workloads that scale-to-zero with per-request scaling, while utilizing your own cluster’s resources rather than using Google’s managed Cloud Run infrastructure. This does not change how your usual Kubernetes workloads and deployments are run, however. It provides a different deployment option for workloads that will benefit from a serverless model.
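Cloud Run for Anthos is built on Knative, so a workload deployed this way is described by a Knative Service rather than a regular Deployment. The following is a minimal sketch of what such a manifest might look like, built as a Python dict for illustration. The function name, service name, image path, and concurrency value are all hypothetical, and the exact fields your cluster accepts may differ by version.

```python
import json


def knative_service(name: str, image: str, concurrency: int = 80) -> dict:
    """Build a Knative Service manifest as a plain dict.

    All names and values here are illustrative assumptions.
    """
    return {
        "apiVersion": "serving.knative.dev/v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "template": {
                "spec": {
                    # Upper bound on in-flight requests per container instance;
                    # the autoscaler adds instances as request load grows.
                    "containerConcurrency": concurrency,
                    "containers": [{"image": image}],
                }
            }
        },
    }


if __name__ == "__main__":
    # Hypothetical image path, used only for demonstration.
    manifest = knative_service("hello", "gcr.io/example-project/hello:latest")
    print(json.dumps(manifest, indent=2))
```

Note that nothing here replaces your ordinary Deployments. The Knative Service is an additional resource type that sits alongside them in the same cluster.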

AKS’s serverless feature is known as virtual nodes. It lets you run Kubernetes pods backed by Azure Container Instances rather than full virtual machines, which allows for faster and more granular scaling. Unlike Cloud Run for Anthos, this compute option runs your existing Kubernetes workloads rather than being an entirely separate deployment option. This means you can seamlessly adopt virtual nodes by targeting specific workloads to run on them.
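Targeting a workload at virtual nodes usually comes down to adding a node selector and tolerations to the pod spec. Here is a rough sketch of that step in Python, operating on a Deployment manifest represented as a dict. The selector labels and taint keys below are the ones Microsoft's virtual nodes documentation has shown, but treat them as assumptions and check them against your own cluster. The function and variable names are hypothetical.

```python
import copy
import json

# Node selector and tolerations as shown in Microsoft's virtual nodes docs
# at the time of writing; verify them on your cluster before relying on them.
VIRTUAL_NODE_SELECTOR = {
    "kubernetes.io/role": "agent",
    "beta.kubernetes.io/os": "linux",
    "type": "virtual-kubelet",
}
VIRTUAL_NODE_TOLERATIONS = [
    {"key": "virtual-kubelet.io/provider", "operator": "Exists"},
    {"key": "azure.com/aci", "effect": "NoSchedule"},
]


def target_virtual_nodes(deployment: dict) -> dict:
    """Return a copy of a Deployment manifest whose pods target virtual nodes."""
    patched = copy.deepcopy(deployment)  # leave the caller's manifest untouched
    pod_spec = patched["spec"]["template"]["spec"]
    pod_spec.setdefault("nodeSelector", {}).update(VIRTUAL_NODE_SELECTOR)
    tolerations = pod_spec.setdefault("tolerations", [])
    for toleration in VIRTUAL_NODE_TOLERATIONS:
        if toleration not in tolerations:  # avoid duplicates on repeated calls
            tolerations.append(dict(toleration))
    return patched


if __name__ == "__main__":
    # A made-up Deployment used only for demonstration.
    deployment = {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": "burst-worker"},
        "spec": {
            "template": {
                "spec": {"containers": [{"name": "worker", "image": "example/worker:1.0"}]}
            }
        },
    }
    print(json.dumps(target_virtual_nodes(deployment), indent=2))
```

Workloads you leave unpatched keep scheduling onto regular VM-backed nodes, which is what makes it possible to adopt virtual nodes one workload at a time.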

EKS offers integration with Fargate, Amazon’s serverless container platform. Similar to AKS’s virtual nodes feature, this option allows you to run pods as container instances, rather than on full virtual machines. Fargate, however, requires the use of Amazon’s Application Load Balancer (ALB), while Azure’s virtual nodes implementation does not require you to use any specific load balancer.

Development tools

Google offers a feature called Cloud Code which is a VS Code or IntelliJ extension that allows you to deploy, debug and manage your cluster right from your IDE/code editor. This also includes direct integration with Cloud Run and Cloud Run for Anthos.

Microsoft offers similar functionality, with a Kubernetes extension within VS Code. Beyond this, however, AKS also has a unique feature known as Bridge to Kubernetes. This lets you run your local code as if it were a service within your cluster, allowing you to run and debug your local code without having to replicate dependencies locally.

Both Google and Microsoft’s Kubernetes extensions support any Kubernetes cluster for generic functionality available with kubectl or the Kubernetes API, meaning you can use them with EKS clusters as well. However, there are no dedicated development tools for EKS that integrate beyond this generic functionality.

Summary

Google Kubernetes Engine (GKE)

By far the easiest-to-use and most feature-rich managed Kubernetes solution. If you have no particular allegiance to any cloud platform and you just want the best Kubernetes experience, look no further.

The fact that GKE sets the bar for managed Kubernetes is no surprise considering Kubernetes was designed by Google. GKE also had a nearly three-year head start on its competitors, giving it ample time to mature and gain features.

GKE offers a rich out-of-the-box experience that gives you integrated logging and monitoring, with Google’s excellent Stackdriver ops tool and full visibility into your workloads and resource usage from the GCP web console. GKE’s CLI experience also offers you full control over your cluster configuration, making cluster creation and management remarkably simple. Simply put, GKE clusters are production-ready out-of-the-box with everything you need to immediately start deploying workloads.

With Google managing so much for you, you lose a little bit of control if you wish to fully customize your cluster. Still, beyond this, GKE is hard to fault. It’s the best managed Kubernetes experience, bar none.

Azure Kubernetes Service (AKS)

A great out-of-the-box experience with powerful development tools and quick Kubernetes updates. It’s the obvious choice for those already in the Microsoft/Azure ecosystem and a strong alternative to GKE for everyone else.

Although it doesn’t quite reach the heights of GKE, AKS has a great out-of-the-box experience with features like logging, monitoring, and metrics. A new Azure portal feature now gives you full visibility into your cluster workloads, although GKE still offers more comprehensive metrics and functionality. After a series of redesigns, Azure’s portal has gone from being a cluttered and confusing mess to a genuinely pleasant experience. Beyond the much-improved portal, AKS also has a strong CLI experience that gives you comprehensive control over your cluster. Clusters are easy to create and manage and are production-ready.

A downside with Azure as a platform is that it’s the least reliable of the three primary cloud providers. In terms of percentage uptime and sheer number of outages, Azure lags behind AWS and GCP. This doesn’t mean it’s unusable (plenty of big companies continue to rely on Azure), but it is something to keep in mind.

Despite some potential downsides, AKS remains a fantastic managed Kubernetes service. Although GKE is the better option for most, Azure has a few primary benefits. If you have an existing presence on Azure or use existing Microsoft tools like 365 or Active Directory, AKS is a natural fit. For everyone else, cheaper pricing with free control planes, fast Kubernetes updates, useful development tooling with VS Code, and a seamless serverless compute option all mean that AKS is a strong offering worth considering.

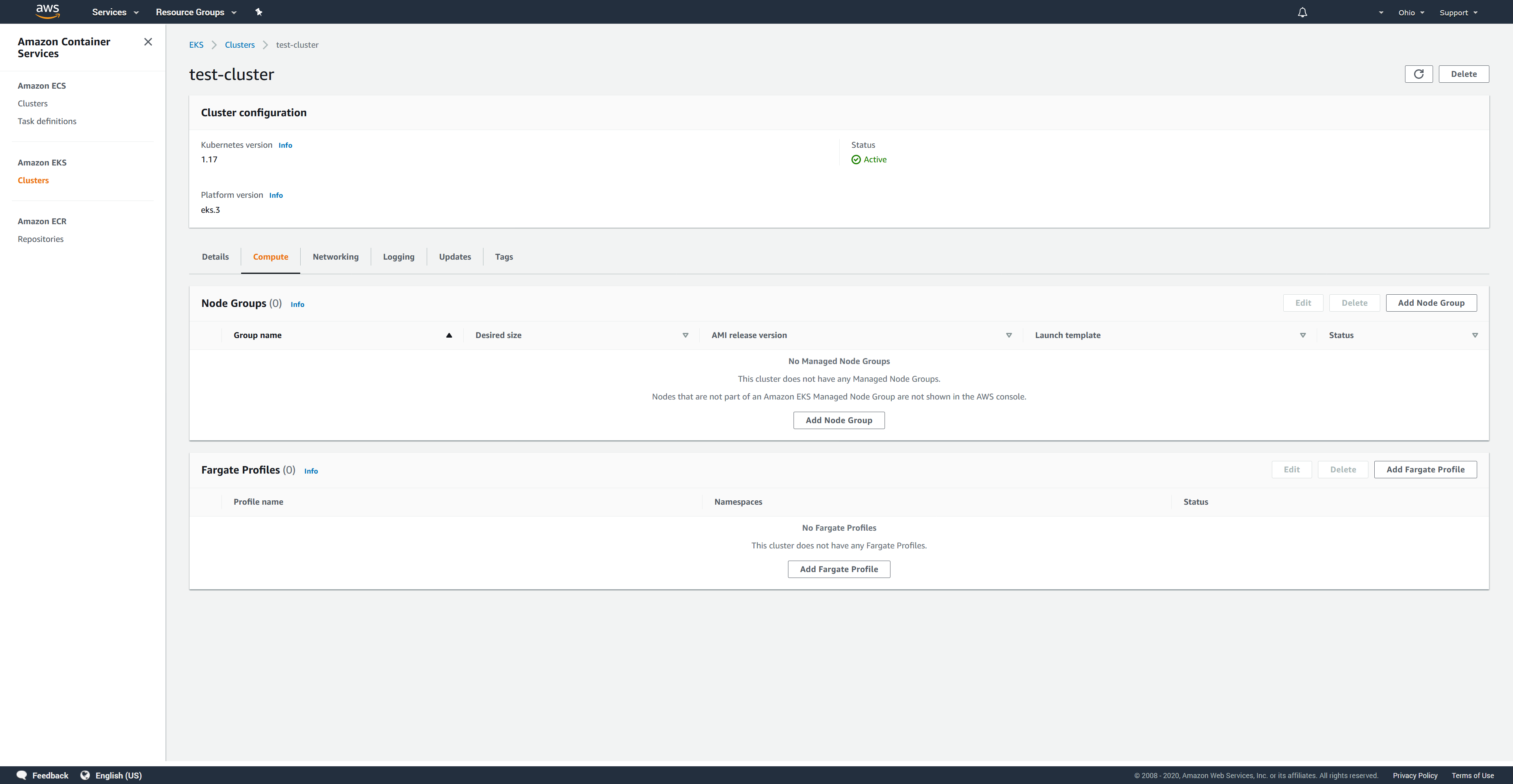

Amazon Elastic Kubernetes Service (EKS)

The weakest Kubernetes offering in terms of feature support, ease-of-use and out-of-the-box experience. Pick it if you must be on AWS or if you want the ability to fully control your Kubernetes cluster.

In contrast to the easy-to-use, heavily managed approach that GKE and AKS take, Amazon Elastic Kubernetes Service (EKS) leaves you to manage a lot of configuration yourself. You’ll spend a lot of time manually configuring IAM roles and policies, as well as installing various pieces of functionality yourself. You don’t get any visibility into your cluster or workloads by default. Both the EKS web interface and its CLI are sparse, limited to a handful of operations.

Third-party tools like the terraform-aws-eks Terraform module and the eksctl command-line tool fill in a lot of the frustrating gaps in EKS’s management experience. Both automate and abstract a fair bit of cluster creation and management complexity. eksctl, specifically, provides the fully featured CLI experience that AWS does not give you. However, even these tools can only go so far. Because this functionality isn’t native to EKS, even if these tools abstract some complexity, you’re still ultimately responsible for maintaining it.
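To give a sense of how much eksctl abstracts, a whole cluster can be described in a short declarative config. Below is a minimal sketch that generates such a config in Python. The cluster name, region, node group name, and instance type are hypothetical placeholders, and the schema version shown (`eksctl.io/v1alpha5`) should be checked against the eksctl release you actually use.

```python
import json


def eksctl_cluster_config(
    name: str, region: str, node_count: int, instance_type: str = "m5.large"
) -> dict:
    """Build a minimal eksctl ClusterConfig as a plain dict.

    All values passed in are illustrative assumptions.
    """
    return {
        "apiVersion": "eksctl.io/v1alpha5",
        "kind": "ClusterConfig",
        "metadata": {"name": name, "region": region},
        "managedNodeGroups": [
            {
                "name": "workers",  # hypothetical node group name
                "instanceType": instance_type,
                "desiredCapacity": node_count,
            }
        ],
    }


if __name__ == "__main__":
    config = eksctl_cluster_config("demo-cluster", "us-west-2", node_count=3)
    # JSON is also valid YAML, so this output should be readable by
    # tools that expect a YAML config file.
    print(json.dumps(config, indent=2))
```

Saved to a file, a config like this would typically be passed to `eksctl create cluster -f <file>`. The IAM roles, networking, and node groups that eksctl creates on your behalf from such a file are still resources in your account that you own and maintain.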

EKS does work very well if you want more control over your cluster. Because EKS is relatively hands-off on the management front, you also get a clean slate to fully customize whatever you need to, if you’re so inclined. EKS is also the only service here with bare metal node support and support for bringing your own machine images.

Ultimately, EKS’s greatest strength is that it’s an AWS service. It lives within one of the strongest and most mature cloud platforms with rock-solid reliability, a wide range of very popular services and a massive developer community.

Conclusion

If you’re looking for the best Kubernetes experience, GKE is by far the best option. It gives you the best out-of-the-box experience, extensive feature support, effortless maintenance, and excellent web and command-line interfaces.

AKS takes a strong second place here with a great out-of-the-box experience, a powerful serverless compute feature and useful development features with VS Code, all offered with a free control plane.

EKS, on the other hand, is very hands-off in its approach, which is great if that’s what you’re looking for. For most, though, if you choose EKS you’re doing so for the strength of AWS and not EKS itself.